YESTERDAY, APPLE CEO TIM COOK published an open letter opposing a court order to build the FBI a “backdoor” for the iPhone.

Cook wrote that the backdoor, which removes limitations on how often an attacker can incorrectly guess an iPhone passcode, would set a dangerous precedent and “would have the potential to unlock any iPhone in someone’s physical possession,” even though in this instance, the FBI is seeking to unlock a single iPhone belonging to one of the killers in a 14-victim mass shooting spree in San Bernardino, California, in December.

It’s true that ordering Apple to develop the backdoor will fundamentally undermine iPhone security, as Cook and other digital security advocates have argued. But it’s possible for individual iPhone users to protect themselves from government snooping by setting strong passcodes on their phones — passcodes the FBI would not be able to unlock even if it gets its iPhone backdoor.

The technical details of how the iPhone encrypts data, and how the FBI might circumvent this protection, are complex and convoluted, and are being thoroughly explored elsewhere on the internet. What I’m going to focus on here is how ordinary iPhone users can protect themselves.

The short version: If you’re worried about governments trying to access your phone, set your iPhone up with a random, 11-digit numeric passcode. What follows is an explanation of why that will protect you and how to actually do it.

If it sounds outlandish to worry about government agents trying to crack into your phone, consider that when you travel internationally, agents at the airport or other border crossings can seize, search, and temporarily retain your digital devices — even without any grounds for suspicion. And while a local police officer can’t search your iPhone without a warrant, cops have used their own digital devices to get search warrants within 15 minutes, as a Supreme Court opinion recently noted.

The most obvious way to try to crack into your iPhone, and what the FBI is trying to do in the San Bernardino case, is to simply run through every possible passcode until the correct one is discovered and the phone is unlocked. This is known as a “brute force” attack.

For example, let’s say you set a six-digit passcode on your iPhone. There are 10 possibilities for each digit in a numbers-based passcode, and so there are 10^6, or 1 million, possible combinations for a six-digit passcode as a whole. It is trivial for a computer to generate all of these possible codes. The difficulty comes in trying to test them.

One obstacle to testing all possible passcodes is that the iPhone intentionally slows down after you guess wrong a few times. An attacker can try four incorrect passcodes before she’s forced to wait one minute. If she continues to guess wrong, the time delay increases to five minutes, 15 minutes, and finally one hour. There’s even a setting to erase all data on the iPhone after 10 wrong guesses.
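To get a feel for how much these delays matter, here is a minimal Python sketch of the escalating schedule described above. It assumes each additional wrong guess moves one step up the schedule (one minute, five minutes, 15 minutes, then one hour for every guess after that); the exact step boundaries, and the names `delay_before_attempt` and `total_delay`, are illustrative assumptions rather than Apple's implementation.

```python
# Assumed schedule: the first four wrong guesses carry no delay, then each
# further guess steps through 1 min, 5 min, 15 min, and finally 1 hour.
FREE_GUESSES = 4
ESCALATING_DELAYS = [60, 5 * 60, 15 * 60, 60 * 60]  # seconds


def delay_before_attempt(attempt):
    """Seconds of forced waiting before the given attempt (1-based)."""
    if attempt <= FREE_GUESSES:
        return 0
    step = min(attempt - FREE_GUESSES - 1, len(ESCALATING_DELAYS) - 1)
    return ESCALATING_DELAYS[step]


def total_delay(attempts):
    """Total forced waiting, in seconds, across the given number of attempts."""
    return sum(delay_before_attempt(n) for n in range(1, attempts + 1))


if __name__ == "__main__":
    seconds = total_delay(10 ** 6)  # every six-digit passcode
    print(f"{seconds / (365 * 24 * 3600):.0f} years of waiting")
```

Under these assumptions, the waiting time alone for a full run through every six-digit passcode stretches past a century, and that is before the optional erase-after-10-guesses setting is taken into account.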

This is where the FBI’s requested backdoor comes into play. The FBI is demanding that Apple create a special version of the iPhone’s operating system, iOS, that removes the time delays and ignores the data erasure setting. The FBI could install this malicious software on the San Bernardino killer’s iPhone, brute force the passcode, unlock the phone, and access all of its data. And that process could hypothetically be repeated on anyone else’s iPhone.

(There’s also speculation that the government could make Apple alter the operation of a piece of iPhone hardware known as the Secure Enclave; for the purposes of this article, I assume the protections offered by this hardware, which would slow an attacker down even more, are not in place.)

Even if the FBI gets its way and can clear away iPhone safeguards against passcode guessing, it faces another obstacle, one that should help keep it from cracking passcodes of, say, 11 digits: It can only test potential passcodes for your iPhone using the iPhone itself; the FBI can’t use a supercomputer or a cluster of iPhones to speed up the guessing process. That’s because iPhone models, at least as far back as May 2012, have come with a Unique ID (UID) embedded in the device hardware. Each iPhone has a different UID fused to the phone, and, by design, no one can read it and copy it to another computer. The iPhone can only be unlocked when the owner’s passcode is combined with the UID to derive an encryption key.
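The idea of tying a key to both the passcode and a per-device secret can be sketched in a few lines of Python. This is not Apple's algorithm: it uses the standard library's PBKDF2 as a stand-in, and the two `uid_*` values are made-up placeholders for a secret that, on a real iPhone, never leaves the hardware.

```python
import hashlib


def derive_key(passcode, device_uid, iterations=100_000):
    """Derive a 32-byte key from a passcode and a per-device secret.

    PBKDF2-HMAC-SHA256 is an illustrative stand-in; the iteration count
    is an arbitrary assumption chosen to make each guess deliberately slow.
    """
    return hashlib.pbkdf2_hmac(
        "sha256", passcode.encode("utf-8"), device_uid, iterations
    )


# Hypothetical device secrets, for illustration only.
uid_phone_a = bytes.fromhex("00" * 31 + "01")
uid_phone_b = bytes.fromhex("00" * 31 + "02")

key_a = derive_key("123456", uid_phone_a)
key_b = derive_key("123456", uid_phone_b)

# The same passcode yields unrelated keys on different devices, so a guess
# can only be checked where the device secret is available.
print(key_a.hex())
print(key_b.hex())
```

Because the device secret is an input to every derivation, an attacker who cannot extract it has no way to move the guessing work onto faster hardware.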

So the FBI is stuck using your iPhone to test passcodes. And it turns out that your iPhone is kind of slow at that: iPhones intentionally encrypt data in such a way that they must spend about 80 milliseconds doing the math needed to test a passcode, according to Apple. That limits them to testing 12.5 passcode guesses per second, which means that guessing a six-digit passcode would take, at most, just over 22 hours.

You can calculate the time for that task simply by dividing the 1 million possible six-digit passcodes by 12.5 guesses per second. That’s 80,000 seconds, or 1,333 minutes, or about 22 hours. But the attacker doesn’t have to try each passcode; she can stop when she finds one that successfully unlocks the device. On average, it will only take 11 hours for that to happen.

But the FBI would be happy to spend mere hours cracking your iPhone. What if you use a longer passcode? Here’s how long the FBI would need:

- seven-digit passcodes will take up to 9.2 days, and on average 4.6 days, to crack

- eight-digit passcodes will take up to three months, and on average 46 days, to crack

- nine-digit passcodes will take up to 2.5 years, and on average 1.2 years, to crack

- 10-digit passcodes will take up to 25 years, and on average 12.6 years, to crack

- 11-digit passcodes will take up to 253 years, and on average 127 years, to crack

- 12-digit passcodes will take up to 2,536 years, and on average 1,268 years, to crack

- 13-digit passcodes will take up to 25,367 years, and on average 12,683 years, to crack
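The estimates in the list above follow from the same arithmetic: the number of possible passcodes divided by 12.5 guesses per second, with the average being half the worst case. A short Python sketch, assuming 365-day years and using helper names invented for this example, reproduces them; results are rounded, so the last digit can differ slightly from the figures quoted above.

```python
GUESSES_PER_SECOND = 12.5  # one guess per 80 milliseconds
SECONDS_PER_DAY = 24 * 60 * 60
SECONDS_PER_YEAR = 365 * SECONDS_PER_DAY  # assumes 365-day years


def worst_case_seconds(digits):
    """Seconds needed to try every numeric passcode of the given length."""
    return 10 ** digits / GUESSES_PER_SECOND


def humanize(seconds):
    """Format a duration in hours, days, or years, whichever fits best."""
    if seconds < SECONDS_PER_DAY:
        return f"{seconds / 3600:.1f} hours"
    if seconds < SECONDS_PER_YEAR:
        return f"{seconds / SECONDS_PER_DAY:.1f} days"
    return f"{seconds / SECONDS_PER_YEAR:,.1f} years"


for digits in range(6, 14):
    worst = worst_case_seconds(digits)
    average = worst / 2  # on average the right code turns up halfway through
    print(f"{digits} digits: up to {humanize(worst)}, average {humanize(average)}")
```

Each extra digit multiplies the time by 10, which is why the jump from six digits to 11 turns hours into centuries.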

It’s important to note that these estimates only apply to truly random passcodes. If you choose a passcode by stringing together dates, phone numbers, social security numbers, or anything else that’s at all predictable, the attacker might try guessing those first, and might crack your 11-digit passcode in a very short amount of time. So make sure your passcode is random, even if this means it takes extra time to memorize it. (Memorizing that many digits might seem daunting, but if you’re older than, say, 29, there was probably a time when you memorized several phone numbers that you dialed on a regular basis.)

Nerd tip: If you’re using a Mac or Linux, you can securely generate a random 11-digit passcode by opening the Terminal app and typing this command:

python3 -c 'from random import SystemRandom as r; print("%011d" % r().randint(0, 10**11-1))'
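If you would rather save this as a small script, the following sketch does the same job with Python's `secrets` module, which draws from the operating system's secure random source. The function name `random_passcode` is just a label chosen for this example. Building the passcode one digit at a time guarantees it always has exactly the requested length, even when it happens to start with zeros.

```python
import secrets

DIGITS = "0123456789"


def random_passcode(length=11):
    """Return a random numeric passcode of exactly `length` digits."""
    return "".join(secrets.choice(DIGITS) for _ in range(length))


if __name__ == "__main__":
    print(random_passcode())
```

Run it once, write the result down somewhere safe until you have memorized it, and resist the urge to re-roll until you get a number that looks easy to remember, since that makes the passcode less random.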

It’s also important to note that we’re assuming the FBI, or some other government agency, has not found a flaw in Apple’s security architecture that would allow it to test passcodes on its own computers or at a rate faster than one guess every 80 milliseconds.

Once you’ve created a new 11-digit passcode, you can start using it by opening the Settings app, selecting “Touch ID & Passcode,” and entering your old passcode if prompted. Then, if you have an existing passcode, select “Change passcode” and enter your old passcode. If you do not have an existing passcode, and are setting one for the first time, tap “Turn passcode on.”

Then, in all cases, tap “Passcode options,” select “Custom numeric code,” and then enter your new passcode.

Here are a few final tips to make this long-passcode thing work better:

- Within the “Touch ID & Passcode” settings screen, make sure to turn on the Erase Data setting to erase all data on your iPhone after 10 failed passcode attempts.

- Make sure you don’t forget your passcode, or you’ll lose access to all of the data on your iPhone.

- Don’t use Touch ID to unlock your phone. Your attacker doesn’t need to guess your passcode if she can push your finger onto the home button to unlock it instead. (At least one court has ruled that while the police cannot compel you to disclose your passcode, they can compel you to use your fingerprint to unlock your smartphone.)

- Don’t use iCloud backups. Your attacker doesn’t need to guess your passcode if she can get a copy of all the same data from Apple’s server, where it’s no longer protected by your passcode.

- Do make local backups to your computer using iTunes, especially if you are worried about forgetting your iPhone passcode. You can encrypt the backups, too.

If you choose a strong passcode, the FBI shouldn’t be able to unlock your encrypted phone, even if it installs a backdoored version of iOS on it. Not unless it has hundreds of years to spare.


Latest Stories

Israel’s War on Gaza

A Gay Palestinian Fled to Israel’s “Safe Haven.” Israel Tried to Exploit Him for Intelligence.

Israel bills itself as a haven for LGBTQ+ rights. Its bureaucratic system can further endanger queer Palestinian asylum-seekers.

Trials of Richard Glossip

Richard Glossip on Life After Decades on Death Row

In an exclusive interview at home in Oklahoma City, Glossip describes his first days of freedom in a world he hasn’t experienced for nearly 30 years.

Midterms 2026

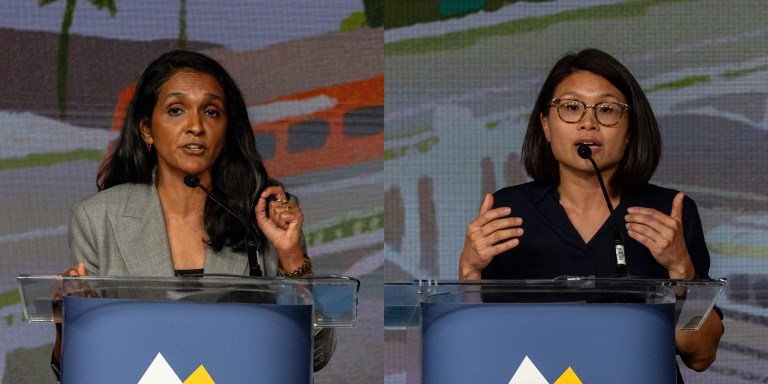

The Los Angeles Left Is at War With Itself Over the Mayor’s Race

Rae Huang supporters say Nithya Raman is compromised. Raman’s base calls Huang a spoiler. Looming over it all: reality TV star Spencer Pratt.