To hear FBI Director James Comey tell it, strong encryption stops law enforcement dead in its tracks by letting terrorists, kidnappers and rapists communicate in complete secrecy.

But that’s just not true.

In the rare cases in which an investigation may initially appear to be blocked by encryption — and so far, the FBI has yet to identify a single one — the government has a Plan B: it’s called hacking.

Hacking — just like kicking down a door and looking through someone’s stuff — is a perfectly legal tactic for law enforcement officers, provided they have a warrant.

Over the years, law enforcement officials have learned many ways to install viruses, Trojan horses, and other forms of malicious code onto suspects’ devices. Doing so gives them the same access to communications that the suspects themselves have: before messages are encrypted, or after they have been decrypted.

Government officials don’t like talking about it — quite possibly because hacking takes considerably more effort than simply asking a telecom provider for records. Robert Litt, general counsel to the Director of National Intelligence, recently referred to potential government hacking as a process of “slow uncertain one-offs.”

But they don’t deny it, either. Hacking is “an avenue to consider and discuss,” Amy Hess, the executive assistant director of the FBI’s Science and Technology Branch, said at an encryption debate earlier this month.

The FBI “routinely identifies, evaluates, and tests potential exploits in the interest of cyber security,” bureau spokesperson Christopher Allen wrote in an email.

Hacking In Action

There are still only a few publicly known cases of government hacking, but they include examples of phishing, “watering hole” websites, and physical tampering.

Phishing involves an attacker masquerading as a trustworthy website or service and luring a victim with an email message asking the person to click on a link or update sensitive information.


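In 2007, the FBI used this technique against a teenager in Washington state who was making bomb threats, sending him a link to a fake news article that installed malware on his computer when he clicked it. The case was controversial and received widespread media attention because the FBI chose a faked news article as its vector of attack. But it also told us two things about FBI hacking: that the FBI has been using that particular kind of malware attack since at least 2007, and that it took the public until 2014 to find out.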

A watering hole attack infects a website with malware, so that anyone who visits it is also infected, potentially allowing the attackers to identify and control the visitor’s devices.

In 2013, as part of a child-pornography investigation, the FBI seized a large number of web servers and installed malware that revealed personally identifying information about visitors to several popular websites, including an email provider. The sites were “Tor hidden service sites,” which route web traffic through relays around the globe to cloak their location. The FBI slipped a piece of code into every website hosted by the Freedom Hosting service, sending information about the hacked visitors back to a server in northern Virginia.

This watering hole attack landed a large number of people in the FBI’s trap, most of them innocent people who hadn’t committed any crimes. And the FBI never told them about it, because it never subpoenaed their identities — even though their computers had been compromised.

The earliest reported case of the FBI using physical tampering dates back all the way to 2001, when agents broke in and installed a system to record keystrokes on Nicodemo Scarfo Jr.’s computer as part of their investigation of the American Mafia.

Confidential informants tipped the FBI off to Scarfo, the son of notorious Philly mob boss “Little Nicky,” and his alleged gambling and extortion operations in New Jersey in 1999. The FBI obtained a search warrant to enter his office and look through his computer. When they found an encrypted folder on his desktop, they installed a keystroke logger in order to get his passkey — which turned out to be Little Nicky’s prison identification number.

The Products

As Wired first reported in 2007, the FBI has its own brand of malware called the Computer and IP Address Verifier (CIPAV), which can capture information about a machine, including browser activity, IP address, operating system details, and other data. The FBI, for instance, used CIPAV to identify a teenager in Washington state who was making bomb threats.

The Electronic Frontier Foundation obtained documents from the FBI in 2011 revealing more about CIPAV, or the “web bug,” as some agents describe it in internal emails. According to the documents, the FBI and other agencies have widely used the tool since 2001 in cities including Denver, El Paso, Honolulu, Philadelphia, Houston, Cincinnati, and Miami.

As EFF noted at the time: “If the FBI already has endpoint surveillance-based tools for internet wiretapping, it casts serious doubt on law enforcement’s claims of ‘going dark.’”

The FBI also uses non-proprietary hacker tools.

Wired reported in 2014 that the FBI had turned to a popular hacking tool called Metasploit, an open-source framework that serves as a sort of one-stop shop for putting together hacking code, complete with fresh exploits and payloads. In 2012, the FBI’s “Operation Torpedo” used it to unmask users of the Tor network. Metasploit had shown that the Flash plug-in could connect to the Internet directly, bypassing the anonymizing Tor network, and had developed code that exploited this to reveal a user’s real IP address. The FBI used a watering hole attack through child-porn websites to install the code on users’ computers.

Federal and local agencies have also consulted with outside contractors, including the controversial Italian firm Hacking Team, to develop and deploy malicious code. The FBI asked Hacking Team in 2012 to help it monitor Tor users. Hacking Team then updated its “Remote Control System” malware to do that.

And as the Washington Post recently reported, an Obama administration working group exploring possible approaches tech companies might use to let law enforcement unlock encrypted communications came up with one that involves the targeted installation of malware — through automatic updates.

“Virtually all consumer devices include the capability to remotely download and install updates to their operating system and applications,” the task force wrote. Law enforcement would use a “lawful process” to force tech companies to “use their remote update capability to insert law enforcement software into a targeted device.” That malware would then “enable far-reaching access to and control of the targeted device.”

The Post did not report who came up with that idea, or whether it was already in use.

And little is known about how much access the FBI has to the extensive hacking capabilities developed by other government agencies, especially the National Security Agency.

The NSA has a separate program, revealed by documents provided by whistleblower Edward Snowden, that aims to hack into computers on a massive scale — automating processes to help decide which attack method to use to get into millions of computers.

The NSA has safeguards on its programs ostensibly designed to protect against hacking into Americans’ computers, but it’s unclear how those protocols work in practice.

And the national security complex has invested in malware, or “offensive” cybersecurity, on a massive scale in order to infiltrate computer systems overseas, according to a 2013 Reuters report. Most famously, the U.S. government reportedly developed the Stuxnet worm, which was deployed to sabotage Iran’s nuclear program.

The Time a Judge Said No

All the known cases of the FBI implementing hacking techniques so far have dealt with obtaining information about the location of a device, what programs are running, and its owner — metadata, rather than actual content of messages.

Only once, at least in the public view, has the FBI plainly asked a judge to let it hack everything: photos, messages, emails, and more. And the FBI was told no.

In that case, a hacker infiltrated a Texas resident’s email and got his bank information. The hacker used anonymizing software that made it look like he was in Southeast Asia. The FBI applied for a warrant to search the hacker’s computer in a number of extremely intrusive ways, including monitoring it continuously for 30 days, surreptitiously taking pictures through its webcam, obtaining photographs and logs of Internet use, and more. The judge denied the request on two grounds: because the agency didn’t know where the computer was, the warrant violated Rule 41 of the Federal Rules of Criminal Procedure, which at the time generally limited magistrate judges to authorizing searches within their own districts; and the request was not specific enough to satisfy the Fourth Amendment.

It’s unclear whether the FBI has ever succeeded in securing a warrant to hack in such an intrusive way. But the request does demonstrate that the FBI has the ability, or at least the confidence, to try.

In other warrant requests to use what it calls “Network Investigative Techniques,” the FBI has listed things it wants to access, including the computer’s IP address or the computer’s time zone information, and finished off the list by asking for “other similar identifying information on the activating computer that may assist in identifying the computer, its location, other information about the computer, and the user of the computer may be accessed by the NIT.”

The FBI does not go into details about what this other information might be.

Better Than a Back Door


Jonathan Mayer, a computer scientist and lawyer at Stanford, analyzed the few public examples of law enforcement hacking he was able to find, most of them from the FBI and DEA: five public court orders and four judicial opinions.

He also looked through declassified FBI documents and found that officials there have “theorized that the Fourth Amendment does not apply” when investigators “algorithmically constrain the information that they retrieve from a hacked device, ensuring they receive only data that is — in isolation — constitutionally unprotected,” such as a name. Sometimes the FBI deploys malware on a device in order to find out who it belongs to.

Mayer said that in internal emails, federal investigators argued that targeted hacking might not constitute a search, and hinted at past times when officials may have hacked without getting a warrant first.

“I believe that hacking can be a legitimate and effective law enforcement technique,” Mayer concluded in his paper. “But appropriate procedural protections are vital, and present practices leave much room for improvement.”

“The FBI is extremely close-mouthed” about how often they hack, Steven Bellovin, a computer science professor at Columbia, told The Intercept. In a lengthy paper Bellovin co-wrote with fellow scholars Matt Blaze, Sandy Clark, and Susan Landau, the authors write that, compared with, say, the “installation of global wiretapping capabilities in the infrastructure,” hacking is “significantly more difficult — more labor intensive, more expensive, and more logistically complex,” which makes it harder to conduct “against all members of a large population.” They consider that a good thing.

And they argue that hacking is a much better solution for law enforcement than weakening encryption with back doors. This way, they write, law enforcement is motivated to find existing holes in security, rather than mandating a new one that weakens an already imperfect system.

Latest Stories

Anthropic Says We Must Stop Authoritarian AI. But What About Its Authoritarian Investors?

Anthropic wants to keep AI away from repressive regimes. But what about its part-owner, the repressive dictatorship of Abu Dhabi?

The Intercept Briefing

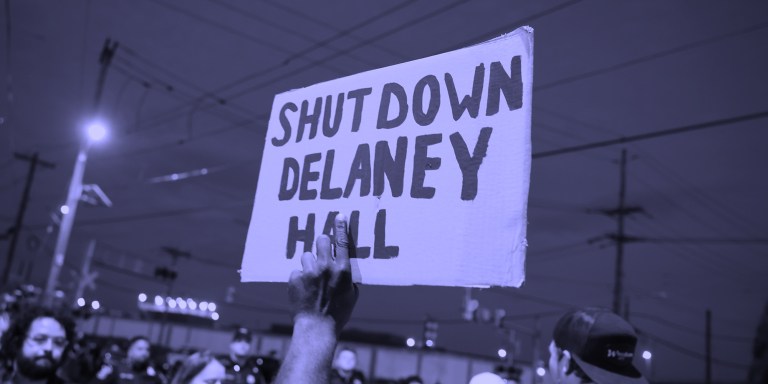

“Warehousing Human Beings”

Former immigration judge Andrea Sáenz and American Immigration Council’s Aaron Reichlin-Melnick on the conditions at Delaney Hall and other ICE detention centers across the U.S.

Trump Administration Tries to Shift Blame for Ebola Response

After cutting its support for frontline healthcare workers in Central Africa, the Trump administration is pointing fingers.