The day after the Supreme Court overturned Roe v. Wade, Facebook’s parent company, Meta, internally designated the abortion rights group Jane’s Revenge as a terrorist organization, according to company materials reviewed by The Intercept, subjecting discussion of the group and its actions to the company’s most stringent censorship policies. Experts say the decision, Meta’s first known policy response for the post-Roe era, threatens free expression around abortion rights at a critical moment.

The brief internal bulletin from Meta Platforms Inc., which owns Instagram and Facebook, was titled “[EMERGENCY Micro Policy Update] [Terrorism] Jane’s Revenge” and filed to the company’s internal Dangerous Individuals and Organizations rulebook, meaning that the abortion rights group, which has so far committed only acts of vandalism, will be treated with the same speech restrictions against “praise, support, and representation” applied to the Islamic State and Hitler. The memo, circulated to Meta moderators on June 25, describes Jane’s Revenge as “a far-left extremist group that has claimed responsibility on its website for an attack against an anti-abortion group’s office in Madison, Wisconsin in May 2022. The group is responsible for multiple arson and vandalism attacks on pro-life institutions.” Terrorist groups receive Meta’s strictest “Tier 1” speech limits, treatment the company says is reserved for the world’s most dangerous and violent entities, along with hate groups, drug cartels, and mass murderers.

Although The Intercept published a snapshot of the entire secret Dangerous Individuals and Organizations list last year, Meta does not disclose or explain additions to the public, despite the urging of scholars, activists, and its own Oversight Board. Speech advocates and civil society groups have criticized the policy for its secrecy, bias toward U.S. governmental priorities, and tendency to inaccurately delete nonviolent political speech. According to Meta’s most recent quarterly transparency report, the company restored nearly half a million posts between January and March in the terrorism category alone after determining that they had been censored erroneously.

Discussion of Jane’s Revenge was already technically subject to Tier 1 censorship stemming from another previously unreported internal speech restriction enacted by Meta last month. In May, just days after Politico published a leaked Supreme Court decision auguring the reversal of Roe v. Wade, the office of Wisconsin Family Action, an anti-abortion group, was vandalized. The very next day, Meta silently banned its roughly 2 billion users from “praising, supporting, or representing” the vandalism or its perpetrators, according to company materials reviewed by The Intercept. While these event-based restrictions are often temporary, the more recent use of the formal “terror” label suggests a more permanent policy position.

“This designation is difficult to square with Meta’s placement of the Oath Keepers and Three Percenters in Tier 3, which is subject to far fewer restrictions, despite their role organizing and participating in the January 6 Capitol attack,” said Mary Pat Dwyer, academic program director of Georgetown Law School’s Institute for Technology Law and Policy. “And while it’s possible Meta has moved those groups into Tier 1 more recently, that only highlights the lack of transparency into when and how these decisions, which have a huge impact on people’s abilities to discuss current events and important political issues, are made.”

The Wisconsin incident, which consisted of a small fire and graffiti denouncing the group’s anti-abortion stance, resulted in only minor property damage to the empty office. But the vandalism was rapidly designated a “Violating Violent Event,” a kind of ad hoc speech restriction that Meta distributes to its content moderation staff to limit discussion across its platforms in response to breaking news and various international crises, typically prominent events like the January 6, 2021, riot at the Capitol, terrorism, public shootings, or ethnic bloodshed.

“We are internally classifying this as a Violating Violent Event (General Designation),” reads the May 11 internal memo, obtained by The Intercept. “All content praising, supporting or representing the event and/or perpetrator(s) should be removed from the platform.” The dispatch instructed moderators to censor depiction and discussion of the vandalism under the Dangerous Individuals and Organizations policy framework, which restricts speech about violent actors like terror cells, neo-Nazis, and drug cartels. “The office of a conservative political organization that lobbies against abortion rights was vandalized and damaged by fire in Madison, Wisconsin,” the memo continued. “A group called Jane’s Revenge took credit for the attack.” The number of victims of the “Violating Violent Event” is marked as “0.”

The Wisconsin Family Action designation is notable not only for the relatively low severity of the attack itself, which Madison police are investigating as an act of arson, but also because it marks a rare foray by Facebook into limiting speech around abortion. Striking as well is the company’s choice to censor abortion rights action, even destructive action, given that throughout the long history of the American abortion debate, the overwhelming majority of violence has been conducted by those seeking to thwart access to the procedure via bombings and assassinations, not expand it. Earlier this month, Axios reported that “assaults directed at abortion clinic staff and patients increased 128% last year over 2020,” according to a report from the National Abortion Federation. And yet of the more than 4,000 names on the company’s Dangerous Individuals and Organizations list, only two are associated with anti-abortion violence or terrorism: the Army of God Christian terrorist cell and one of its affiliates, the notorious bomber Eric Rudolph. While extremely little is known about Jane’s Revenge, including whether the vandalism is even being committed by the same actors and to what extent it is even a group, prominent right-wing politicians have begun demanding that the property damage be treated as domestic terrorism, a stance now essentially endorsed by Meta.

But the company also appears to have avoided censoring discussion of more recent anti-abortion acts comparable to the Wisconsin fire. On New Year’s Eve, arsonists destroyed a Planned Parenthood clinic in Knoxville, Tennessee, that had been riddled with bullets earlier in the year on the anniversary of the Roe v. Wade ruling. According to multiple sources familiar with Facebook’s content moderation policies, who spoke on the condition of anonymity because they are not permitted to speak to the press, the New Year’s Eve Planned Parenthood torching was never similarly designated a “Violating Violent Event.” While anti-abortion advocates are still barred from inciting further violence against Planned Parenthood clinics (or anything else), Meta users now have far greater latitude to discuss — or even praise — that instance of anti-abortion violence than comparable acts from the other side.

The frequently malfunctioning nature of Facebook’s global censorship rules also means that the Wisconsin-specific update and more recent terror label, even if intended only to thwart future real-world acts of violence from either side of the abortion debate, could end up stifling legitimate political speech. While the company’s general-purpose “Community Standards” rulebook places a blanket prohibition against any explicit calls for violence, only explicitly flagged people, groups, and events are subject to Meta’s far more stringent bans against “praise, support, and representation,” restrictions that bar users from quoting, depicting, or speaking positively of the entity or action in question. But the ambiguous formulation and frequently uneven enforcement of these rules mean that speech far short of crossing the red line of violent incitement is subject to deletion. The Dangerous Individuals and Organizations ban on “praise, support, and representation” has been frequently cited by Facebook when deleting posts documenting or protesting Israeli state violence against Palestinians, for example, instances of which have at times been designated “Violating Violent Events” as well.

Jane’s Revenge is poorly understood, controversial, and subject to intense debate at precisely the time the Dangerous Individuals and Organizations designations mean that billions of people are limited in what they can say about the perpetrators, their motives, or their methods. Anything that could be construed as “praise,” however tentative, risks deletion. Indeed, even Facebook’s public description of the “praise, support, and representation” standard notes that any posts “Legitimizing the cause of a designated entity by making claims that their hateful, violent, or criminal conduct is legally, morally, or otherwise justified or acceptable” are prohibited.

The company’s internal overview of the “praise” standard, obtained and published by The Intercept last year, directs moderators to delete anything that “engages in value-based statements and emotive argument” or “seeks to make others think more positively” of the sanctioned entity or event. While these internal rules permit “Academic debate and informative, educational discourse” of a violent entity or event, what meets the threshold for “academic debate” or “informative discourse” is left to Facebook’s thousands of overworked, low-paid hourly contractors to determine.

Content moderation experts who spoke to The Intercept said the policy threatens discussion and debate of abortion rights protests at a time when such speech is of profound national importance. “What we’ve seen in the past is that when Facebook bans certain types of harmful speech, they often catch counterspeech and other types of commentary in their content moderation net,” said Jillian York, director for international freedom of expression at the Electronic Frontier Foundation. “For example, efforts to ban terrorist content often result in the removal of counterspeech against terrorism or the sharing of documentation. The use of automated technologies only exacerbates this; therefore, it isn’t difficult to imagine that an attempt to ban vandalism against an anti-abortion group could also ban legitimate speech against such a group.”

Evelyn Douek, a Harvard Law School lecturer and fellow with the Knight First Amendment Institute, described the ad hoc censorship of “violating events” via the Dangerous Individuals and Organizations framework as “extremely capacious” and “one of Facebook’s most controversial and problematic policies,” both because these designations are made in secret and because they are so likely to constitute subjective political determinations. While Meta moderators are provided with an extensive rulebook containing this designation and countless others, the combination of the company’s increasing reliance on automated algorithmic content screening and the personal judgment calls of low-paid, overworked contractors creates erratic, faulty results. “There are legitimate concerns that this might shut down debate,” said Douek.

Douek said the opacity of the censorship policy, paired with Facebook’s “incredibly blunt and error-prone” enforcement of speech restrictions, poses a threat to political discussion and debate around both abortion per se and the broader reproductive rights movement. Even those who don’t condone the methods of Jane’s Revenge have an interest in talking about them and perhaps even entertaining them: There is a vast universe of discourse about political direct action and violence, even vandalism, that isn’t in and of itself incitement, a swath of speech that could be vacuumed up by Facebook’s bludgeon approach to speech rules. “[Saying] you support the goals, the underlying policy of what Jane’s Revenge is fighting for, even if you disagree with their tactics, there’s all sorts of conversation here that we have about lots of different groups in society on the margins that I’m worried about losing.”

Significant as well is the fact that free expression around relatively minor acts of violence would not only be censored in the first place but also subjected to the same limits Facebook uses for Al Qaeda and the Third Reich. “It’s somewhat remarkable that this act of vandalism was so quickly added to the list. It really is intended to be reserved for the most serious kind of incidents” like hate crimes, gun massacres, and terrorist attacks, Douek explained, “a policy that’s really targeted at the worst of the worst.” The decision to censor free discussion of Jane’s Revenge, responsible for a failed firebombing and a series of threatening graffiti incidents, makes the fact that Facebook did not similarly limit discussion of the Tennessee Planned Parenthood arson even more puzzling. Douek and York place that decision in a long history of Facebook putting its finger on the scales of political discourse in a way that often appears ideologically motivated, or on other occasions completely arbitrary. “It’s precisely the issue raised by their constant picking and choosing of ‘winners,’” York told The Intercept. “Ukrainians get to say violent shit, Palestinians don’t. White supremacists do, pro-choice people don’t.”

In an email to The Intercept, Meta spokesperson Devon Kearns confirmed the terror designation of Jane’s Revenge and said that the company “will remove content that praises, supports, or represents the organization.” Kearns stated that the company has a multifaceted process when determining which people and groups are restricted under the Dangerous Organizations policy, but did not say what it was or why Jane’s Revenge had been flagged but not other actors committing violence to advance their stance on abortion. Kearns further noted that users may appeal deletions made through the Dangerous Organizations policy if they believe they were made in error.

Assessing the merits of a decision made and implemented in secret is exceedingly difficult. Although Meta provides a generalized, big-picture overview of what sort of speech is barred from its platforms with a handful of uncontroversial examples (e.g., “If you want to fight for the Caliphate, DM me”), the specifics of the rules are concealed from the billions of people expected to heed them, as is any rationale as to why the rules were drafted in the first place. York told The Intercept that the Jane’s Revenge move is another indication that Meta needs to “immediately institute the Santa Clara Principles,” a content moderation policy charter that mandates “clear and precise rules and policies relating to when action will be taken with respect to users’ content or accounts,” among many other items.

Without the entirety of the company’s rules and their justification provided to the public, Meta, which exercises an enormous degree of control over what speech is allowed on the internet, leaves billions posting in the dark. Meta’s claim has always been that it takes no sides on any issue and only deletes speech in the name of safety, a claim the public generally has to take as an article of faith given the company’s deep secrecy in both what the rules are and how they’re enforced. “For a platform that is consistently insisting that it’s neutral and doesn’t have its finger on the scale, it’s really incumbent on Meta to be much more forthcoming,” Douek added. “These are highly charged political decisions, and they need to be able to defend them.”

IT’S EVEN WORSE THAN WE THOUGHT.

What we’re seeing right now from Donald Trump is a full-on authoritarian takeover of the U.S. government.

This is not hyperbole.

Court orders are being ignored. MAGA loyalists have been put in charge of the military and federal law enforcement agencies. The Department of Government Efficiency has stripped Congress of its power of the purse. News outlets that challenge Trump have been banished or put under investigation.

Yet far too many are still covering Trump’s assault on democracy like politics as usual, with flattering headlines describing Trump as “unconventional,” “testing the boundaries,” and “aggressively flexing power.”

The Intercept has long covered authoritarian governments, billionaire oligarchs, and backsliding democracies around the world. We understand the challenge we face in Trump and the vital importance of press freedom in defending democracy.

We’re independent of corporate interests. Will you help us?

IT’S BEEN A DEVASTATING year for journalism — the worst in modern U.S. history.

We have a president with utter contempt for truth aggressively using the government’s full powers to dismantle the free press. Corporate news outlets have cowered, becoming accessories in Trump’s project to create a post-truth America. Right-wing billionaires have pounced, buying up media organizations and rebuilding the information environment to their liking.

In this most perilous moment for democracy, The Intercept is fighting back. But to do so effectively, we need to grow.

That’s where you come in. Will you help us expand our reporting capacity in time to hit the ground running in 2026?

We’re independent of corporate interests. Will you help us?

I’M BEN MUESSIG, The Intercept’s editor-in-chief. It’s been a devastating year for journalism — the worst in modern U.S. history.

We have a president with utter contempt for truth aggressively using the government’s full powers to dismantle the free press. Corporate news outlets have cowered, becoming accessories in Trump’s project to create a post-truth America. Right-wing billionaires have pounced, buying up media organizations and rebuilding the information environment to their liking.

In this most perilous moment for democracy, The Intercept is fighting back. But to do so effectively, we need to grow.

That’s where you come in. Will you help us expand our reporting capacity in time to hit the ground running in 2026?

We’re independent of corporate interests. Will you help us?

Latest Stories

Trials of Richard Glossip

Richard Glossip on Life After Decades on Death Row

In an exclusive interview at home in Oklahoma City, Glossip describes his first days of freedom in a world he hasn’t experienced for nearly 30 years.

Midterms 2026

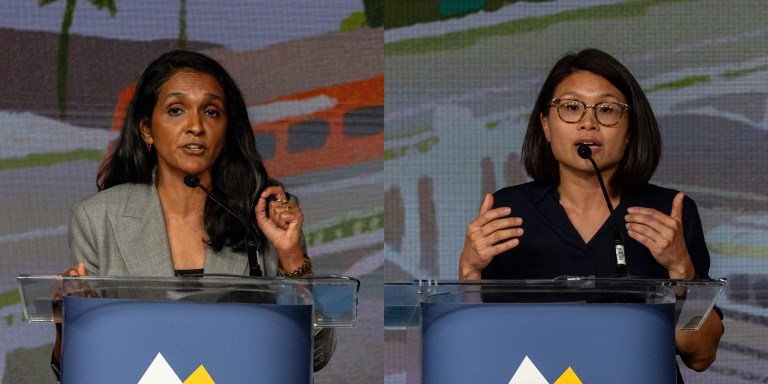

The Los Angeles Left Is at War With Itself Over the Mayor’s Race

Rae Huang supporters say Nithya Raman is compromised. Raman’s base calls Huang a spoiler. Looming over it all: reality TV star Spencer Pratt.

Chilling Dissent

ICE Pepper-Sprayed, Beat Detainees for Protesting “Horrific Conditions” In Delaney Hall Jail

Detainees told a visiting member of Congress that the attacks were “retribution for the ongoing hunger strike.”