Part 2

Experimenting With Disaster

In America’s biolabs, hundreds of accidents have gone undisclosed to the public.

At the moment that the ferret bit him, the researcher was smack in the middle of Manhattan, in a lab one block from Central Park’s East Meadow. It was the Friday afternoon before Labor Day in 2011, and people were rushing out of the city for a long weekend. Three days earlier, the ferret had been inoculated with a recombinant strain of 1918 influenza, which killed between 20 and 50 million people when it swept through the world at the end of World War I. To prevent it from sparking another pandemic, 1918 influenza is studied under biosafety level 3 conditions, the second-tightest of biosafety controls available. The researcher at Mount Sinai School of Medicine (now Icahn School of Medicine at Mount Sinai) was wearing protective equipment, including two pairs of gloves. But the ferret bit hard enough to pierce through both pairs, breaking the skin of his left thumb.

The flu is typically transmitted through respiratory droplets, and an animal bite is unlikely to infect a scientist. But with a virus as devastating as 1918 flu, scientists are not supposed to take any chances. The researcher squeezed blood out of the wound, washed it with an ethanol solution, showered, and left the lab. A doctor gave him a flu shot and prescribed him Tamiflu. Then, after checking that he lived alone, a Mount Sinai administrator sent him home to quarantine for a week, unsupervised, in the most densely populated city in the United States. As documents obtained by The Intercept show, staff told him to take his temperature two times a day and to wear an N95 respirator if he got sick and needed to leave for medical care.

NIH guidelines say that only people exposed through their respiratory tract or mucous membranes need to be isolated in a dedicated facility, rather than at home. But some experts contend that the protocols governing research with the most dangerous pathogens should be stronger. “That is a pretty significant biosafety breach,” said Gregory Koblentz, director of the Biodefense Graduate Program at George Mason University’s Schar School of Policy and Government. Simon Wain-Hobson, a virologist at the Pasteur Institute in Paris, agreed: “Say the risk was 0.1 percent. But if he just happened to be unlucky, then the consequences would be absolutely gigantic.” A researcher stuck in a small apartment in New York City might be tempted to venture outside to get food or fresh air, he added.

Jesse Bloom, an evolutionary virologist at Fred Hutchinson Cancer Center, said that Mount Sinai’s response seemed appropriate. But, he said, the episode shows that “accidents sometimes happen even where there isn’t negligence.” In his view, the solution was simpler: 1918 influenza is so dangerous that experiments with it shouldn’t be done at all.

Adolfo García-Sastre, the lab’s principal investigator, knew firsthand how work with the 1918 flu virus could spark controversy. In 2005, he was part of a team that reconstructed the virus in order to study how it had become so devastating. The effort was the culmination of an outlandish journey, which started when a Swedish microbiologist trekked to Alaska to take a sample of the virus from the corpse of a 1918 flu victim; the woman had been buried in a mass grave after the virus wiped out most of her village, and her body was preserved in the permafrost. Using that and other samples, scientists spent years sequencing parts of the virus, and eventually the whole genome. García-Sastre and collaborators then used a technique called reverse genetics to make DNA copies of the virus’s genes, laying the groundwork for recreating the virus. (The actual reconstruction of the virus was done at a Centers for Disease Control and Prevention lab in Atlanta.) When the team studied the virus in mice, they found that it was incredibly lethal. Some mice died within three days of infection.

Furor ensued. Biosafety proponents argued that the risk of accidental release was not worth taking. No one really knew how potent the virus would be in modern times. Did we want to find out?

The ferret bite happened six years later but has not been publicized until now. For some, it is a stark example of the risks that accompany research on dangerous pathogens.

The mishap and hundreds of others are recorded in more than 5,500 pages of National Institutes of Health documents obtained under the Freedom of Information Act, detailing accidents between 2004 and 2021. The Intercept requested some of the reports directly, while Edward Hammond, former director of the transparency group the Sunshine Project, and Lynn Klotz, senior science fellow at the Center for Arms Control and Non-Proliferation, separately requested and provided others.

The documents show that accidents happen with risky research even at highly secure labs. NIH recently convened an advisory panel to consider how it regulates such experiments.

In 2017, following a protracted controversy over experiments in which scientists tweaked the H5N1 avian influenza virus to make it more transmissible in ferrets, the Department of Health and Human Services adopted new oversight of research on pathogens with the capacity to spark a pandemic. Those guidelines require experiments that are “reasonably anticipated” to confer dangerous new traits to so-called “potential pandemic pathogens” — or create new ones — to undergo a special review process in order to get NIH funding. But as The Intercept has reported, the policy has been unevenly applied.

Some experts are calling for other biosafety policies, such as those outlining what to do after a lab accident, to be tightened as well. “A lot of our talk now is about potential pandemic pathogens and risks around that,” said Koblentz of the ferret bite. “But the 1918 flu was a known pandemic pathogen. That should have the highest possible level of biosafety and measures taken in the event of an accident or a suspected or known exposure.”

Mount Sinai and García-Sastre did not respond to requests for comment. Mount Sinai reported the ferret bite to NIH, as well as to the CDC and the U.S. Department of Agriculture, as required under a program governing the use of certain pathogens and toxins called select agents.

“[I]solation in a predetermined facility was not necessary because an animal bite did not meet the definition of known laboratory exposure with a high risk of infection,” wrote Ryan Bayha, a spokesperson for NIH’s Office of Science Policy, in an email. (Bayha was previously an analyst with the office, and the report on the ferret bite was addressed to him.)

Bloom said that experiments with 1918 influenza are scientifically interesting. At one point, he supported doing them. But he came to change his views after considering the risks more holistically. “I now feel that experiments with actual 1918 influenza just shouldn’t be done,” he said. To him, the ferret bite shows that accidents with dangerous viruses happen at even the best, most secure labs. “It’s like a nuclear weapons accident. The downside with that type of pandemic pathogen is so high that it just doesn’t seem to me that there’s any level at which it’s worth it.”

“A Complete Farce”

Many of the biotechnology safety standards in place today trace to 1975, when a group of scientists gathered at the Asilomar Conference Center on the California coast. Advances in biology had recently made it possible to modify DNA by inserting genes from one organism into the genetic code of another, and scientists convened the International Congress on Recombinant DNA Molecules to consider the implications of such research. Though driven by concerns about ethics, the conference would come to be seen by historians and bioethicists as an elite gathering aimed in part at warding off intervention by the U.S. Congress.

Three years earlier, Stanford University biochemists Paul Berg and Janet Mertz had sparked an outcry when they combined genes from the gut bacterium E. coli with DNA from a type of simian virus that can cause tumors in rodents. They had planned to insert the new DNA back into E. coli, but some of their peers worried that the modified bacteria could cause cancer in lab workers. Others feared that genetically engineered organisms could be used as bioweapons. The Asilomar meeting was organized in part by scientists whose primary interest was in allowing the research to go forward. Berg, under fire, co-chaired the conference.

“They focused on this idea that research is done outside of society — that if scientists can get their act in order and govern themselves, then they don’t have to worry about the broader world,” said Sam Weiss Evans, a senior research fellow at Harvard Kennedy School’s Program on Science, Technology, and Society. “But for many citizens at the time, the issue was very different: Are these scientists going to run rampant and just do whatever they want, or is there going to be some kind of ability for us to have a check on them?”

The critics’ worst fears about carcinogenic gut bacteria did not pan out, but the notion that scientists could set their own guardrails would have long-lasting consequences. The recommendations drawn up by the delegates to the Asilomar conference became the basis for the NIH guidelines on recombinant DNA that, with some revisions, are still in place today.

In 2001, after letters laced with anthrax killed five Americans, the United States adopted new biosecurity regulations, including rules governing the use of select agents. A decade later, the H5N1 controversy spurred another layer of oversight. But in other areas, regulation is lacking, despite breakthroughs in fields like synthetic DNA.

At NIH, meanwhile, critics point to an inherent conflict of interest: The agency is charged with overseeing the same research it funds.

Institutional biosafety committees — or IBCs, review boards at universities and other institutions that evaluate potentially risky research plans for NIH compliance — are another legacy of Asilomar. Scientists devising a new experiment consider the risks and come up with ways to mitigate them: safety equipment, checks, and controls. They then propose that plan to the IBC. But there are no standards in place for an IBC to determine whether the benefits of an experiment actually justify the remaining risks — a glaring problem when it comes to pathogens like the 1918 flu virus.

“Yes, they’re all experts, and yes, they’re all trained in this type of thing, but do we really just want it to be down to a judgment call?” said Rocco Casagrande, managing director of the biosafety advisory firm Gryphon Scientific. “How do you determine if the experiment should be done, if there really aren’t any standards?”

Critics say that the IBC system, like NIH oversight, also has a conflict-of-interest problem: Research is evaluated by an institution that relies on grant funding. Some institutions even hire out IBC work to private companies.

As director of the Sunshine Project, which is now defunct, Hammond spent years pressing institutions for minutes from institutional biosafety committee meetings, which NIH requires be made available upon request. Some of the institutions he contacted could not provide them, he said. “The IBCs didn’t exist at a lot of institutions. They hadn’t met in years. They weren’t doing the oversight business. The system was just a complete farce.”

Shortly before the reconstruction of the 1918 flu virus, Hammond’s Sunshine Project published a report that singled out Mount Sinai for criticism, alleging that the institution had no IBC minutes. Earlier this year, for an investigation published by Undark, journalist Michael Schulson asked eight institutions in the New York area for IBC minutes. Mount Sinai did not provide them, Schulson told The Intercept. Mount Sinai also did not respond to a request to provide minutes to The Intercept.

The documents reviewed by The Intercept show broad variation in how seriously scientists and biosafety officers treated errors and accidents. In one report, a principal investigator apologized profusely after his IBC approval expired in the chaos of the early pandemic and his lab continued with research without renewing it. “This is completely my (PI) fault,” he wrote. “I failed my role as an effective PI this time.”

In other cases, staff appear eager to avoid responsibility. After a 2020 incident in which a researcher at the University of Wisconsin–Madison pricked themselves with a needle while working in a biosafety level 3 lab with a mouse infected with Mycobacterium tuberculosis, a biosafety officer blamed the accident on the mouse, writing, “The root cause is the natural instinct of an animal to be uncooperative with a procedure it dislikes.” (The officer wrote that “incomplete restraint” techniques contributed to the accident.)

In responding to violations, NIH can ask for changes or corrective action — and in some cases, the agency did. It can also pull funding if the guidelines aren’t met. But in 18 years of documents, The Intercept found no evidence of such extreme measures being taken. In one instance, NIH threatened to terminate funding after two incidents in a University of Wisconsin–Madison lab working with modified H5N1 avian influenza; the standoff ended with the institution adopting stricter protocols.

Regulators intent on preventing future pandemics are now exploring changes to biosafety policies. The issue has been taken up by Congress, the White House, the World Health Organization, and NIH itself. But the discussion is highly politicized, with some scientists resisting regulation and some experts pessimistic that the process will lead to real change.

One problem is a dearth of information. “We don’t have a clear picture of all accidents,” said Filippa Lentzos, an expert on biosecurity and biological threats at King’s College London. “It’s difficult to get good information on how risky stuff is, and how likely it is that you’re going to have an accident. We simply don’t have that data.” News of severe breaches sometimes leaks out in press reports. But many lab workers are graduate students. For them, speaking up about safety problems could mean career suicide.

The new documents fill in some of those gaps. While the researcher at Mount Sinai did not fall ill, in a small number of cases, accidents did lead to infection. In one instance, a researcher at Washington University in St. Louis contracted chikungunya virus, which has sparked epidemics in Africa, after pricking herself with a needle in a biosafety level 3 lab. She reported the accident only after getting sick.

With pathogens like the 1918 flu virus, the stakes are even higher. The current system “gives a good level of review most of the time,” Bloom said. “But it’s not the kind of system that you could count on if you potentially have research that could kill 10 million people if it goes wrong.”

“There’s a lot of responsibility that comes with doing these experiments that are so high-risk,” said Lentzos. “It’s about talking through some of that. That is the biggest loophole that needs to be addressed.”

IT’S EVEN WORSE THAN WE THOUGHT.

What we’re seeing right now from Donald Trump is a full-on authoritarian takeover of the U.S. government.

This is not hyperbole.

Court orders are being ignored. MAGA loyalists have been put in charge of the military and federal law enforcement agencies. The Department of Government Efficiency has stripped Congress of its power of the purse. News outlets that challenge Trump have been banished or put under investigation.

Yet far too many are still covering Trump’s assault on democracy like politics as usual, with flattering headlines describing Trump as “unconventional,” “testing the boundaries,” and “aggressively flexing power.”

The Intercept has long covered authoritarian governments, billionaire oligarchs, and backsliding democracies around the world. We understand the challenge we face in Trump and the vital importance of press freedom in defending democracy.

We’re independent of corporate interests. Will you help us?

IT’S BEEN A DEVASTATING year for journalism — the worst in modern U.S. history.

We have a president with utter contempt for truth aggressively using the government’s full powers to dismantle the free press. Corporate news outlets have cowered, becoming accessories in Trump’s project to create a post-truth America. Right-wing billionaires have pounced, buying up media organizations and rebuilding the information environment to their liking.

In this most perilous moment for democracy, The Intercept is fighting back. But to do so effectively, we need to grow.

That’s where you come in. Will you help us expand our reporting capacity in time to hit the ground running in 2026?

We’re independent of corporate interests. Will you help us?

I’M BEN MUESSIG, The Intercept’s editor-in-chief. It’s been a devastating year for journalism — the worst in modern U.S. history.

We have a president with utter contempt for truth aggressively using the government’s full powers to dismantle the free press. Corporate news outlets have cowered, becoming accessories in Trump’s project to create a post-truth America. Right-wing billionaires have pounced, buying up media organizations and rebuilding the information environment to their liking.

In this most perilous moment for democracy, The Intercept is fighting back. But to do so effectively, we need to grow.

That’s where you come in. Will you help us expand our reporting capacity in time to hit the ground running in 2026?

We’re independent of corporate interests. Will you help us?

Latest Stories

Trials of Richard Glossip

Richard Glossip on Life After Decades on Death Row

In an exclusive interview at home in Oklahoma City, Glossip describes his first days of freedom in a world he hasn’t experienced for nearly 30 years.

Midterms 2026

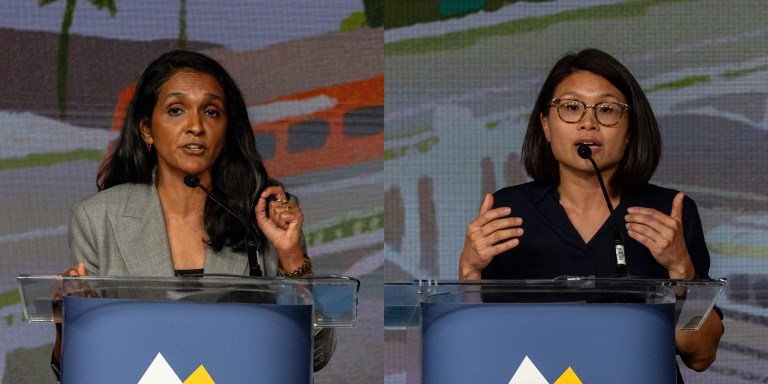

The Los Angeles Left Is at War With Itself Over the Mayor’s Race

Rae Huang supporters say Nithya Raman is compromised. Raman’s base calls Huang a spoiler. Looming over it all: reality TV star Spencer Pratt.

Chilling Dissent

ICE Pepper-Sprayed, Beat Detainees for Protesting “Horrific Conditions” In Delaney Hall Jail

Detainees told a visiting member of Congress that the attacks were “retribution for the ongoing hunger strike.”