A program spearheaded by the World Bank that uses algorithmic decision-making to means-test poverty relief money is failing the very people it’s intended to protect, according to a new report by Human Rights Watch. The anti-poverty program in question, known as the Unified Cash Transfer Program, was put in place by the Jordanian government.

Having software systems make important choices is often billed as a means of making those choices more rational, fair, and effective. In the case of the poverty relief program, however, the Human Rights Watch investigation found the algorithm relies on stereotypes and faulty assumptions about poverty.

“The problem is not merely that the algorithm relies on inaccurate and unreliable data about people’s finances,” the report found. “Its formula also flattens the economic complexity of people’s lives into a crude ranking that pits one household against another, fueling social tension and perceptions of unfairness.”

The program, known in Jordan as Takaful, is meant to solve a real problem: The World Bank provided the Jordanian state with a multibillion-dollar poverty relief loan, but it’s impossible for the loan to cover all of Jordan’s needs.

Without enough cash to cut every needy Jordanian a check, Takaful works by analyzing the household income and expenses of every applicant, along with nearly 60 socioeconomic factors like electricity use, car ownership, business licenses, employment history, illness, and gender. Applicants are then ranked, using a secret algorithm, to automatically determine who is poorest and most deserving of relief. The idea is that such a sorting algorithm would direct cash to the most vulnerable Jordanians, those in the most dire need of it. According to Human Rights Watch, the algorithm is broken.

The rights group’s investigation found that car ownership seems to be a disqualifying factor for many Takaful applicants, even if they are too poor to buy gas to drive the car.

Similarly, applicants are penalized for using electricity and water based on the presumption that their ability to afford utility payments is evidence that they are not as destitute as those who can’t. The Human Rights Watch report, however, explains that sometimes electricity usage is high precisely for poverty-related reasons. “For example, a 2020 study of housing sustainability in Amman found that almost 75 percent of low-to-middle income households surveyed lived in apartments with poor thermal insulation, making them more expensive to heat.”

In other cases, a Jordanian household may use more electricity than its neighbors because it is stuck with old, energy-inefficient home appliances.

Beyond the technical problems with Takaful itself are the knock-on effects of digital means-testing. The report notes that many people in dire need of relief money lack the internet access to even apply for it, requiring them to find, or pay for, a ride to an internet café, where they are subject to further fees and charges to get online.

“Who needs money?” asked one 29-year-old Jordanian Takaful recipient who spoke to Human Rights Watch. “The people who really don’t know how [to apply] or don’t have internet or computer access.”

Human Rights Watch also faulted Takaful’s insistence that applicants’ self-reported income match up exactly with their self-reported household expenses, which “fails to recognize how people struggle to make ends meet, or their reliance on credit, support from family, and other ad hoc measures to bridge the gap.”

The report found that the rigidity of this step forced people to simply fudge the numbers so that their applications would even be processed, undermining the algorithm’s illusion of objectivity. “Forcing people to mold their hardships to fit the algorithm’s calculus of need,” the report said, “undermines Takaful’s targeting accuracy, and claims by the government and the World Bank that this is the most effective way to maximize limited resources.”

The report, based on 70 interviews with Takaful applicants, Jordanian government workers, and World Bank personnel, emphasizes that the system is part of a broader trend by the World Bank to popularize algorithmically means-tested social benefits over universal programs throughout the developing economies in the so-called Global South.

Compounding the dysfunction of an algorithmic program like Takaful is the increasingly common and naïve assumption that automated decision-making software is so sophisticated that its results are less likely to be faulty. Just as dazzled ChatGPT users often accept nonsense outputs from the chatbot because the concept of a convincing chatbot is so inherently impressive, artificial intelligence ethicists warn that the veneer of intelligence surrounding automated welfare distribution leads to a similar myopia.

The Jordanian government’s official statement to Human Rights Watch defending Takaful’s underlying technology provides a perfect example: “The methodology categorizes poor households to 10 layers, starting from the poorest to the least poor, then each layer includes 100 sub-layers, using statistical analysis. Thus, resulting in 1,000 readings that differentiate amongst households’ unique welfare status and needs.”

When Human Rights Watch asked the Distributed AI Research Institute to review these remarks, Alex Hanna, the group’s director of research, concluded, “These are technical words that don’t make any sense together.” DAIR senior researcher Nyalleng Moorosi added, “I think they are using this language as technical obfuscation.”

As is the case with virtually all automated decision-making systems, while the people who designed Takaful insist on its fairness and functionality, they refuse to let anyone look under the hood. Though it’s known that Takaful uses 57 different criteria to rank poverty, the report notes that the Jordanian National Aid Fund, which administers the system, “declined to disclose the full list of indicators and the specific weights assigned, saying that these were for internal purposes only and ‘constantly changing.’”

While fantastical visions of “Terminator”-like artificial intelligences have come to dominate public fears around automated decision-making, some technologists argue civil society ought to focus on real, current harms caused by systems like Takaful, not nightmare scenarios drawn from science fiction.

So long as the inner workings of Takaful and its ilk remain government and corporate secrets, the extent of those risks will remain unknown.

IT’S EVEN WORSE THAN WE THOUGHT.

What we’re seeing right now from Donald Trump is a full-on authoritarian takeover of the U.S. government.

This is not hyperbole.

Court orders are being ignored. MAGA loyalists have been put in charge of the military and federal law enforcement agencies. The Department of Government Efficiency has stripped Congress of its power of the purse. News outlets that challenge Trump have been banished or put under investigation.

Yet far too many are still covering Trump’s assault on democracy like politics as usual, with flattering headlines describing Trump as “unconventional,” “testing the boundaries,” and “aggressively flexing power.”

The Intercept has long covered authoritarian governments, billionaire oligarchs, and backsliding democracies around the world. We understand the challenge we face in Trump and the vital importance of press freedom in defending democracy.

We’re independent of corporate interests. Will you help us?

IT’S BEEN A DEVASTATING year for journalism — the worst in modern U.S. history.

We have a president with utter contempt for truth aggressively using the government’s full powers to dismantle the free press. Corporate news outlets have cowered, becoming accessories in Trump’s project to create a post-truth America. Right-wing billionaires have pounced, buying up media organizations and rebuilding the information environment to their liking.

In this most perilous moment for democracy, The Intercept is fighting back. But to do so effectively, we need to grow.

That’s where you come in. Will you help us expand our reporting capacity in time to hit the ground running in 2026?

We’re independent of corporate interests. Will you help us?

I’M BEN MUESSIG, The Intercept’s editor-in-chief. It’s been a devastating year for journalism — the worst in modern U.S. history.

We have a president with utter contempt for truth aggressively using the government’s full powers to dismantle the free press. Corporate news outlets have cowered, becoming accessories in Trump’s project to create a post-truth America. Right-wing billionaires have pounced, buying up media organizations and rebuilding the information environment to their liking.

In this most perilous moment for democracy, The Intercept is fighting back. But to do so effectively, we need to grow.

That’s where you come in. Will you help us expand our reporting capacity in time to hit the ground running in 2026?

We’re independent of corporate interests. Will you help us?

Latest Stories

The Intercept Briefing

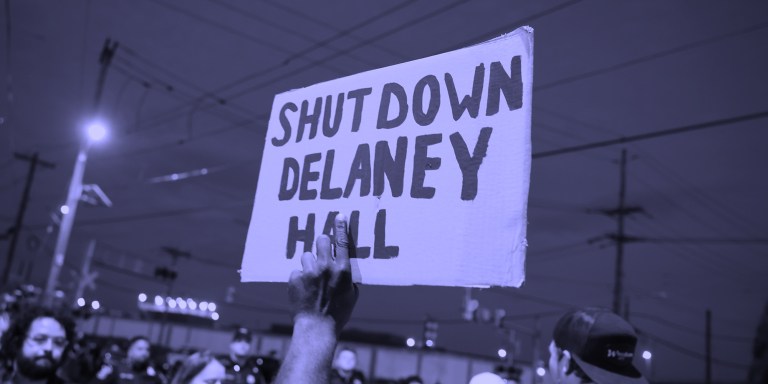

“Warehousing Human Beings”

Former immigration judge Andrea Sáenz and American Immigration Council’s Aaron Reichlin-Melnick on the conditions at Delaney Hall and other ICE detention centers across the U.S.

Trump Administration Tries to Shift Blame for Ebola Response

After cutting its support for frontline healthcare workers in Central Africa, the Trump administration is pointing fingers.

Israel’s Lebanon Blitz

House Dems Coming Around on Iran War — But Won’t Vote to Stop Israel’s Destruction of Lebanon

Though its backers remain optimistic, a bill blocking U.S. support for Israel’s war in Lebanon exposed rifts among Democrats.