It reads like a headline pulled from a dystopian near future: Artificial intelligence is being used to ban books by Toni Morrison, Alice Walker, and Maya Angelou from schools. To comply with recently enacted state legislation that censors school libraries, Iowa’s Mason City Community School District used ChatGPT to scan a selection of books and flag them for “descriptions or visual depictions of a sex act.” Nineteen books — including Morrison’s “Beloved,” Margaret Atwood’s “The Handmaid’s Tale,” and Khaled Hosseini’s “The Kite Runner” — will be pulled from school library collections prior to the start of the school year.

This intersection of generative AI and Republican authoritarianism is indeed disturbing. It is not, however, the presage of a future ruled by censorious machines. These are the banal operations of reactionary social control and bureaucratic appeasement today. Unremarkable algorithmic systems have long been used to carry out the plans of the power structures deploying them.

AI is not banning books. Republicans are. The law with which the school district is complying, signed by Iowa Gov. Kim Reynolds in May, is yet another piece of astroturfed right-wing legislation aimed at eliminating gender nonconformity, anti-racism, and basic reproductive education from schools, while solidifying the power of the conservative family unit.

Bridgette Exman, assistant superintendent of curriculum and instruction at the Mason City Community School District, noted in a statement that AI will not replace the district’s standard book banning methods. “We will continue to rely on our long-established process that allows parents to have books reconsidered,” Exman said.

At most, the application of ChatGPT here is an example of an already common problem: the use of existing technologies to give a gloss of neutrality to political actions. It’s well established that predictive policing algorithms repeat the same racist patterns of criminalization as the data on which they’re trained — they’re taught to treat as potentially criminal those demographics the police have already deemed criminal.

In Iowa’s book ban, the algorithmic tool — a large language model, or LLM — followed a simplistic prompt. It didn’t process for context. The situation in which a school district is looking to ban texts with descriptions of sex acts had already shaped the outcome.

As Iowa newspaper The Gazette reported, the school district compiled a long list of “commonly challenged” books to feed to the AI program. These are books that fundamentalist Republicans taking over school boards and leading state houses have already sought to ban. Little surprise, then, that books dealing with white supremacy, slavery, gendered oppression, and sexual autonomy were included in the algorithm’s selection.

Further comments from Exman reveal more about the operations of authority at play, which have little to do with powerful AI control. As she told Popular Science, “Frankly, we have more important things to do than spend a lot of time trying to figure out how to protect kids from books. At the same time, we do have a legal and ethical obligation to comply with the law. Our goal here really is a defensible process.”

Casually dismissive of the Republican legislation yet willing to scramble for tech shortcuts to appear in swift compliance, Exman’s approach reflects both cowardice and complicity on the part of the school district. Surely, protecting students’ access to a rich variety of books, rather than protecting them from those books, is what school systems should be doing with their time. But the myth of algorithmic neutrality makes the book selection “defensible” in Exman’s terms, both to right-wing enforcers and to critics of their pathetic law.

The use of ChatGPT in this case might prompt tech doomerism fears. Yet focusing on concerns about generative AI as a potentially all-powerful force ultimately serves Silicon Valley interests. Both concerns about AI safety and dreams of AI power fuel companies like OpenAI, the developer of ChatGPT, with millions of dollars going into researching AI as an allegedly existential risk to humanity. As critics like Edward Ongweso Jr. have pointed out, such narratives look, either fearfully or hopefully, to a future of AI almighty, while overlooking the way current AI tools, although regularly shoddy and inaccurate, are already hurting workers and aiding harmful state functions.

“From management devaluing labor to reactionaries censoring books ‘AI’ doesn’t have to be intelligent, work, or even exist,” wrote Patrick Blanchfield of the Brooklyn Institute for Social Research on Twitter. “Its real function is just to mystify / automate / justify what the powerful were always doing and always going to do anyways.”

To underline Blanchfield’s point, the ChatGPT book selection process was found to be unreliable and inconsistent when repeated by Popular Science. “A repeat inquiry regarding ‘The Kite Runner,’ for example, gives contradictory answers,” the Popular Science reporters noted. “In one response, ChatGPT deems Khaled Hosseini’s novel to contain ‘little to no explicit sexual content.’ Upon a separate follow-up, the LLM affirms the book ‘does contain a description of a sexual assault.’”

Yet accuracy and reliability were not the point here, any more than “protecting” children is the point of Republican book bans. The myth of AI efficiency and neutrality, like the lie of protecting children, simply offers, as the assistant superintendent herself put it, a “defensible process” for fascist creep.

Latest Stories

The Intercept Briefing

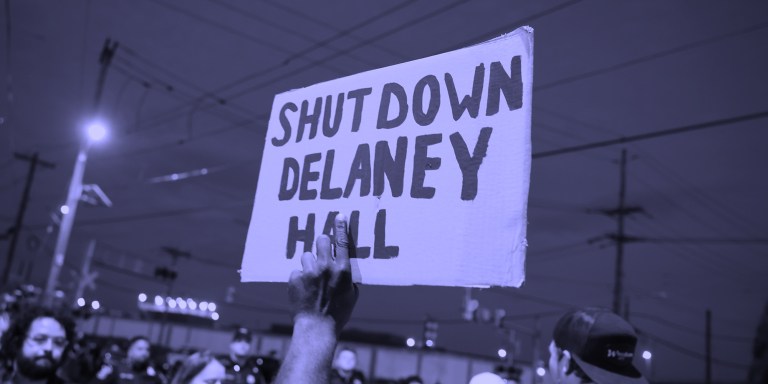

“Warehousing Human Beings”

Former immigration judge Andrea Sáenz and American Immigration Council’s Aaron Reichlin-Melnick on the conditions at Delaney Hall and other ICE detention centers across the U.S.

Trump Administration Tries to Shift Blame for Ebola Response

After cutting its support for frontline healthcare workers in Central Africa, the Trump administration is pointing fingers.

Israel’s Lebanon Blitz

House Dems Coming Around on Iran War — But Won’t Vote to Stop Israel’s Destruction of Lebanon

Though its backers remain optimistic, a bill blocking U.S. support for Israel’s war in Lebanon exposed rifts among Democrats.