Maybe you’ve heard of the new, vibrant philosophy called “longtermism.” It’s beloved by Elon Musk and Peter Thiel and many other Silicon Valley winners who are funding its development and promulgation.

I can’t claim to be the world’s greatest expert on longtermism. However, I have read two articles and almost a dozen tweets about it, and so am qualified to have an opinion on the internet. Here’s what it’s about:

Longtermists believe that Future Lives Matter. And if humanity doesn’t completely obliterate itself, there are going to be lots of us in the eons to come. One longtermist estimate is that “the lower bound of the number of biological human life-years … is 10^34 years.”

If I remember the two weeks in sixth grade when we covered exponents, these 10,000,000,000,000,000,000,000,000,000,000,000 future human life years are 10,000,000,000,000,000,000,000 times greater than the puny 1,000,000,000,000 life years of the 8,000,000,000 people currently alive (if we assume we’ll all die at 125).
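
For anyone who skipped those two weeks, the arithmetic can be checked with a minimal sketch. The variable names are illustrative, and the inputs are simply the figures quoted above, including the assumption that everyone dies at 125.

```python
# Figures taken from the text above; integer arithmetic keeps the comparison exact.
future_life_years = 10**34        # the quoted longtermist lower bound
people_alive = 8_000_000_000      # current population, as stated above
assumed_lifespan_years = 125      # the assumption that we'll all die at 125

# Total life years of everyone currently alive.
current_life_years = people_alive * assumed_lifespan_years

# How many times larger the future total is than the present one.
ratio = future_life_years // current_life_years

print(f"{current_life_years:,}")  # 1,000,000,000,000
print(f"{ratio:,}")               # 10,000,000,000,000,000,000,000
```

So the present comes to 10^12 life years and the future outnumbers it by a factor of 10^22, which matches the figures above.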

The natural conclusion here is that if we are morally serious, we must carry out mass slaughter undreamed of by Hitler if there’s even the tiniest chance that it will prevent the extinction of humanity. As one prominent longtermist puts it, “the expected value of reducing existential risk by a mere one millionth of one percentage point is at least ten times the value of a billion human lives” (italics in the original, to show that he truly means it).

Longtermists generally see three key existential risks that could cause humanity to go extinct: artificial intelligence, bioengineered plagues, and an asteroid strike. By contrast, nuclear war might or might not count, and runaway global warming wouldn’t, because at least a few people would survive it and bounce back.

You can see how this perspective would appeal to Musk in particular: If you’re contributing to getting humans off Earth to Mars (and from there across the universe), you should receive a get-out-of-jail-free card for any other deeds, no matter how hideous.

In any case, no one can deny that this kind of thought experiment is fun, especially if you’re super high. On the other hand, the entire edifice of longtermism is built on a foundation that is largely arbitrary and highly disputable, and with other starting points, you can derive pretty much any philosophy you want. So let’s do it! Here are seven other moral codes that all rational people must adopt:

Yum, Zebra-ism

Let’s say there’s a 1 in a quadrillion chance that the human life span will increase by a factor of a quintillion if the only food we eat is living zebras. Is this true? Who knows, but it could be, since this subject has not been fully explored by science. Logically, we must therefore devote all our efforts to breeding enough struggling, shrieking zebras for us to consume as our sole source of nutrition. Yes, we will live our long lives drenched in zebra blood and viscera. But this is the price of seeing the world clearly.

To Serve Man-ism

Conversely, imagine there’s a 1 in a quadrillion chance that there’s an intelligent alien species out there with the potential to breed a quintillion times more prodigiously than us. But they will only be able to do this if they find and eat us. This means we must immediately start broadcasting a beacon out into space with video of all of us happily sitting in a huge soup pot until we are tender and delicious. “Can’t wait to meat you!” we will say, as we point to especially succulent parts of our bodies.

Bees-ism

There are currently more than 2 trillion bees alive on earth. Research shows that bees enjoy being alive 1 septillion times more than humans do. (This research is funded by bees.) A little simple math proves that billions of us must work ourselves to death to enhance the lives of the bees — the glorious, buzzing bees.

Ocean Spray-ism

It is possible that we are living in a simulation. If so, it is also possible that our creator will get bored with us and shut our simulation down. Maybe the only thing that has prevented this from happening so far is the TikTok of that guy drinking Ocean Spray cran-raspberry juice on a skateboard and lip-syncing to “Dreams” by Fleetwood Mac. I know that if I were an adolescent in another dimension who’d bought The Sims: Milky Way with my allowance, I’d wait a little bit to see if humanity comes up with something that entertaining again. Let’s get all our greatest minds on this ASAP before we’re unplugged.

Paperclip-ism

The danger of artificial intelligence is not just that it could be actively hostile toward us; it could destroy us even if it were simply indifferent. The classic example is the Paperclip Maximizer, an AI that concludes that the highest value in the universe is creating paper clips. It might therefore monopolize all resources and devote them to carrying out its mission, leaving us to starve in the dark, surrounded by paper clips. (The most painful irony is that such an AI wouldn’t even produce paper to clip together.) But we must accept that there’s a chance making paper clips actually is the highest value in the universe, and therefore we should build such an AI and see what happens.

Jack Off-ism

There’s also a chance that the greatest joy available in the universe is mental masturbation. Certainly we know there’s literally no belief system humans won’t adopt in order to avoid dealing with the obvious problems right in front of them. We should rearrange all society so the maximum number of us can engage in a frenzy of pointless self-gratification.

Death to Heretics-ism

There are 10^34 human life years at risk here. If there’s even a 1 in 10^33 chance that the people making jokes about longtermism will prevent its adoption, these jokesters must be hunted down and killed — for the good of our children, and their children after them, and their children’s children’s children’s children’’’’s child’r’e’n’s, etc. (I don’t like this one.)

Latest Stories

Daughter of 2028 Olympics Chair Dreams of Competing in LA — for Israel

Hollywood scion Casey Wasserman faced criticisms as Los Angeles Olympics chief for his connections to the late pedophile Jeffrey Epstein.

Anthropic Says We Must Stop Authoritarian AI. But What About Its Authoritarian Investors?

Anthropic wants to keep AI away from repressive regimes. But what about its part-owner, the repressive dictatorship of Abu Dhabi?

The Intercept Briefing

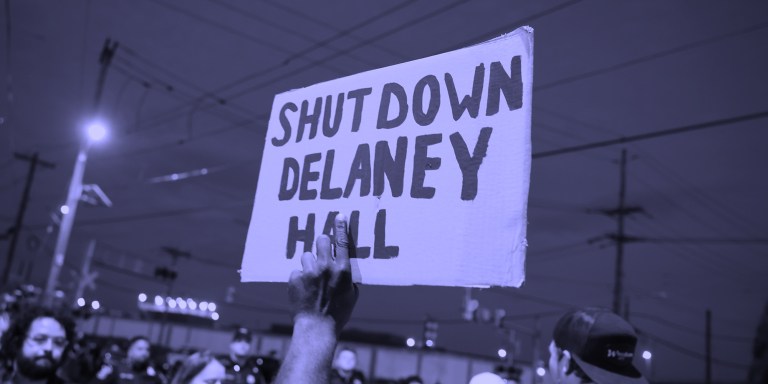

“Warehousing Human Beings”

Former immigration judge Andrea Sáenz and American Immigration Council’s Aaron Reichlin-Melnick on the conditions at Delaney Hall and other ICE detention centers across the U.S.