The social media giant Meta recently updated the rulebook it uses to censor online discussion of people and groups it deems “dangerous,” according to internal materials obtained by The Intercept. The policy had come under fire in the past for casting an overly wide net that ended up removing legitimate, nonviolent content.

The goal of the change is to remove less of this material. In updating the policy, Meta, the parent company of Facebook and Instagram, also made an internal admission that the policy has censored speech beyond what the company intended.

Meta’s “Dangerous Organizations and Individuals,” or DOI, policy is based around a secret blacklist of thousands of people and groups, spanning everything from terrorists and drug cartels to rebel armies and musical acts. For years, the policy prohibited the more than one billion people using Facebook and Instagram from engaging in “praise, support or representation” of anyone on the list.

Now, Meta will provide a greater allowance for discussion of these banned people and groups — so long as it takes place in the context of “social and political discourse,” according to the updated policy, which also replaces the blanket prohibition against “praise” of blacklisted entities with a new ban on “glorification” of them.

The updated policy language has been distributed internally, but Meta has yet to disclose it publicly beyond a mention of the “social and political discourse” exception on the community standards page. Blacklisted people and organizations are still banned from having an official presence on Meta’s platforms.

The revision follows years of criticism of the policy. Last year, a third-party audit commissioned by Meta found the company’s censorship rules systematically violated the human rights of Palestinians by stifling political speech, and singled out the DOI policy. The new changes, however, leave major problems unresolved, experts told The Intercept. The “glorification” adjustment, for instance, is well intentioned but likely to suffer from the same ambiguity that created issues with the “praise” standard.

“Changing the DOI policy is a step in the right direction, one that digital rights defenders and civil society globally have been requesting for a long time,” Mona Shtaya, nonresident fellow at the Tahrir Institute for Middle East Policy, told The Intercept.

Observers like Shtaya have long objected to how the DOI policy has tended to disproportionately censor political discourse in places like Palestine — where discussing a Meta-banned organization like Hamas is unavoidable — in contrast to how Meta rapidly adjusted its rules to allow praise of the Ukrainian Azov Battalion despite its neo-Nazi sympathies.

“The recent edits illustrate that Meta acknowledges the participation of certain DOI members in elections,” Shtaya said. “However, it still bars them from its platforms, which can significantly impact political discourse in these countries and potentially hinder citizens’ equal and free interaction with various political campaigns.”

Acknowledged Failings

Meta has long maintained that the original DOI policy is intended to curtail the ability of terrorists and other violent extremists to cause real-world harm. Content moderation scholars and free expression advocates, however, argue that the way the policy operates in practice creates a tendency to indiscriminately swallow up and delete entirely nonviolent speech. (Meta declined to comment for this story.)

In the new internal language, Meta acknowledged the failings of its rigid approach and said the company is attempting to improve the rule. “A catch-all policy approach helped us remove any praise of designated entities and individuals on the platform,” read an internal memo announcing the change. “However, this approach also removes social and political discourse and causes enforcement challenges.”

Meta’s proposed solution is “recategorizing the definition of ‘Praise’ into two areas: ‘References to a DOI,’ and ‘Glorification of DOIs.’ These fundamentally different types of content should be treated differently.” Mere “references” to a terrorist group or cartel kingpin will be permitted so long as they fall into one of 11 new categories of discourse Meta deems acceptable:

Elections, Parliamentary and executive functions, Peace and Conflict Resolution (truce/ceasefire/peace agreements), International agreements or treaties, Disaster response and humanitarian relief, Human Rights and humanitarian discourse, Local community services, Neutral and informative descriptions of DOI activity or behavior, News reporting, Condemnation and criticism, Satire and humor.

Posters will still face strict requirements to avoid running afoul of the policy, even if they’re attempting to participate in one of the above categories. To stay online, any Facebook or Instagram posts mentioning banned groups and people must “explicitly mention” one of the permissible contexts or face deletion. The memo says “the onus is on the user to prove” that they’re fitting into one of the 11 acceptable categories.

According to Shtaya, the Tahrir Institute fellow, the revised approach continues to put Meta’s users at the mercy of a deeply flawed system. She said, “Meta’s approach places the burden of content moderation on its users, who are neither language experts nor historians.”

Unclear Guidance

Instagram and Facebook users will still have to hope their words aren’t interpreted by Meta’s outsourced legion of overworked, poorly paid moderators as “glorification.” The term is defined internally in almost exactly the same language as its predecessor, “praise”: “Legitimizing or defending violent or hateful acts by claiming that those acts or any type of harm resulting from them have a moral, political, logical, or other justification that makes them appear acceptable or reasonable.” Another section defines glorification as any content that “justifies or amplifies” the “hateful or violent” beliefs or actions of a banned entity, or describes them as “effective, legitimate or defensible.”

Though Meta intends this language to be universal, equitably and accurately applying labels as subjective as “legitimate” or “hateful” to the entirety of global online discourse has proven impossible to date.

“Replacing ‘praise’ with ‘glorification’ does little to change the vagueness inherent to each term,” according to Ángel Díaz, a professor at University of Southern California’s Gould School of Law and a scholar of social media content policy. “The policy still overburdens legitimate discourse.”

The notions of “legitimization” and “justification” are deeply complex, philosophical matters that would be difficult for anyone to address, let alone a contractor responsible for making hundreds of judgments each day.

The revision does little to address the heavily racialized way in which Meta assesses and attempts to thwart dangerous groups, Díaz added. While the company still refuses to disclose the blacklist or how entries are added to it, The Intercept published a full copy in 2021. The document revealed that the overwhelming majority of the “Tier 1” dangerous people and groups — who are still subject to the harshest speech restrictions under the new policy — are Muslim, Arab, or South Asian. White, American militant groups, meanwhile, are overrepresented in the far more lenient “Tier 3” category.

Díaz said, “Tier 3 groups, which appear to be largely made up of right-wing militia groups or conspiracy networks like QAnon, are not subject to bans on glorification.”

Meta’s own internal rulebook seems unclear about how enforcement is supposed to work, and it remains dogged by the same inconsistencies and self-contradictions that have muddled the policy’s implementation for years.

For instance, the rule permits “analysis and commentary” about a banned group, but a hypothetical post arguing that the September 11 attacks would not have happened absent U.S. aggression abroad is considered a form of glorification, presumably of Al Qaeda, and should be deleted, according to one example provided in the policy materials. Though one might vehemently disagree with that premise, it’s difficult to claim it’s not a form of analysis and commentary.

Another hypothetical post in the internal language says, in response to Taliban territorial gains in the Afghanistan war, “I think it’s time the U.S. government started reassessing their strategy in Afghanistan.” The post, the rule says, should be labeled as nonviolating, despite what appears to be a clear-cut characterization of the banned group’s actions as “effective.”

David Greene, civil liberties director at the Electronic Frontier Foundation, told The Intercept these examples illustrate how difficult it will be to consistently enforce the new policy. “They run through a ton of scenarios,” Greene said, “but for me it’s hard to see a through-line in them that indicates generally applicable principles.”

Latest Stories

The Intercept Briefing

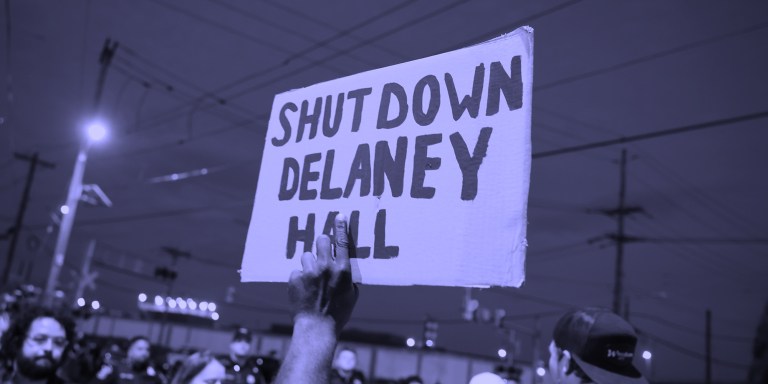

“Warehousing Human Beings”

Former immigration judge Andrea Sáenz and American Immigration Council’s Aaron Reichlin-Melnick on the conditions at Delaney Hall and other ICE detention centers across the U.S.

Trump Administration Tries to Shift Blame for Ebola Response

After cutting its support for frontline healthcare workers in Central Africa, the Trump administration is pointing fingers.

Israel’s Lebanon Blitz

House Dems Coming Around on Iran War — But Won’t Vote to Stop Israel’s Destruction of Lebanon

Though its backers remain optimistic, a bill blocking U.S. support for Israel’s war in Lebanon exposed rifts among Democrats.